10 Multiple Regression

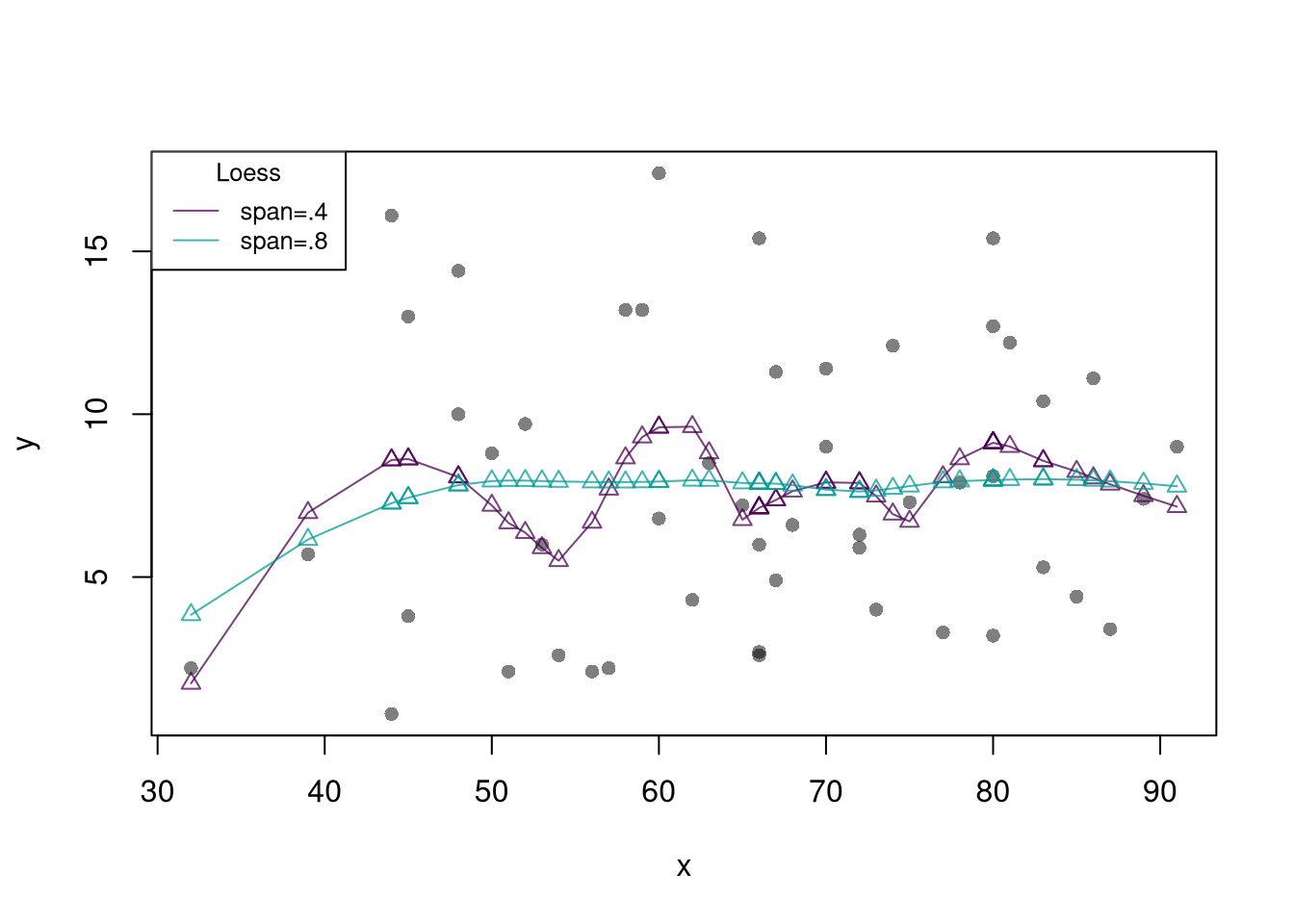

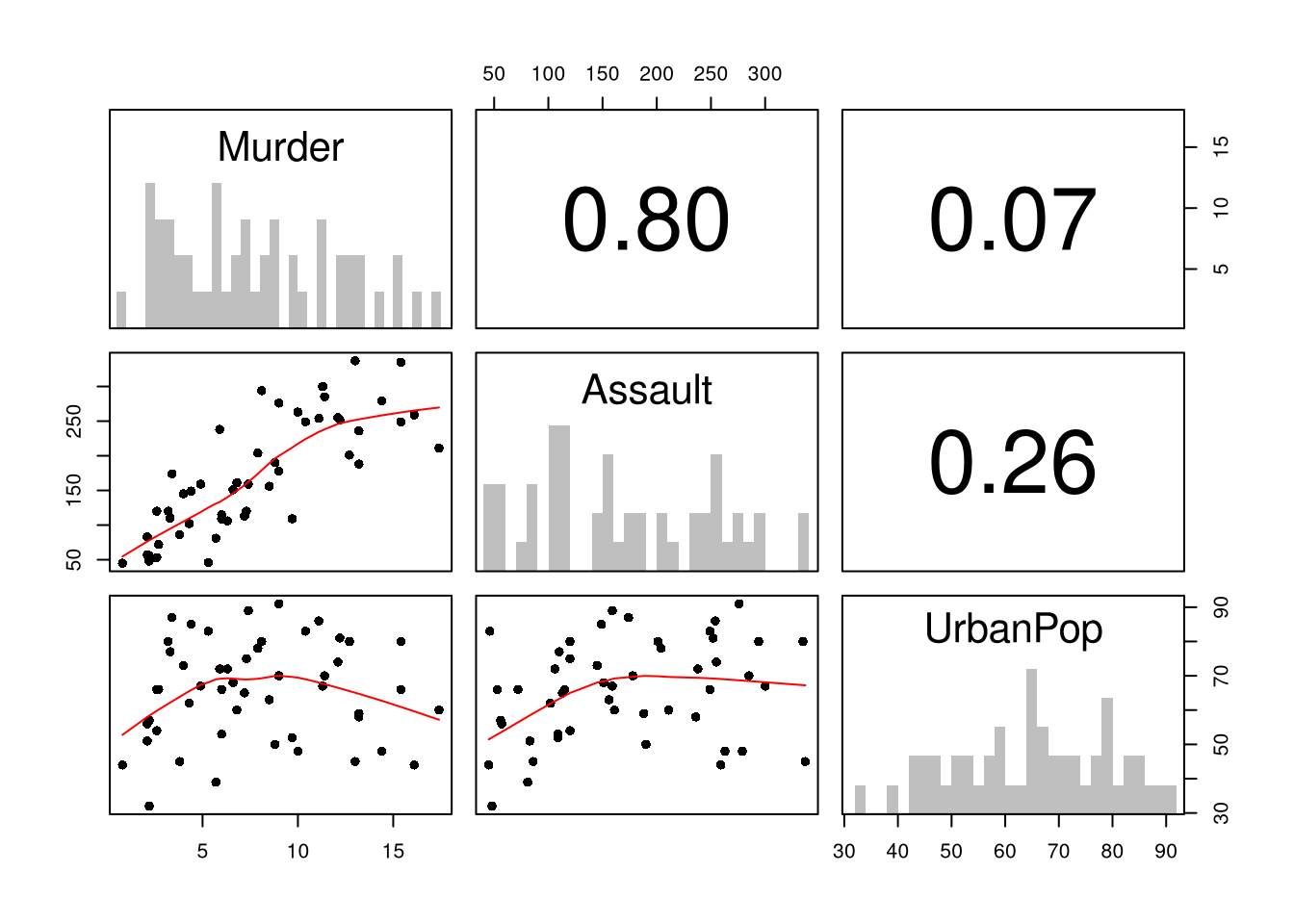

First, note that you can summarize a dataset with multiple variables using the previous tools.

Code

# Inspect Dataset on police arrests for the USA in 1973

head(USArrests)

## Murder Assault UrbanPop Rape

## Alabama 13.2 236 58 21.2

## Alaska 10.0 263 48 44.5

## Arizona 8.1 294 80 31.0

## Arkansas 8.8 190 50 19.5

## California 9.0 276 91 40.6

## Colorado 7.9 204 78 38.7

library(psych)

pairs.panels( USArrests[,c('Murder','Assault','UrbanPop')],

hist.col=grey(0,.25), breaks=30, density=F, hist.border=NA, # Diagonal

ellipses=F, rug=F, smoother=F, pch=16, col='red' # Lower Triangle

)

10.1 Multiple Linear Regression

With \(K\) variables, the linear model is \[ y_i=\beta_0+\beta_1 x_{i1}+\beta_2 x_{i2}+\ldots+\beta_K x_{iK}+\epsilon_i = [1~~ x_{i1} ~~\ldots~~ x_{iK}] \beta + \epsilon_i \] and our objective is \[ \min_{\beta} \sum_{i=1}^{N} (\epsilon_i)^2. \]

Denoting \[ y= \begin{pmatrix} y_{1} \\ \vdots \\ y_{N} \end{pmatrix} \quad \textbf{X} = \begin{pmatrix} 1 & x_{11} & \ldots & x_{1K} \\ \vdots & \vdots & & \vdots \\ 1 & x_{N1} & \ldots & x_{NK} \end{pmatrix}, \] we can also write the model and objective in matrix form \[ y=\textbf{X}\beta+\epsilon\\ \min_{\beta} (\epsilon' \epsilon) \]

Minimizing the squared errors yields coefficient estimates \[ \hat{\beta}=(\textbf{X}'\textbf{X})^{-1}\textbf{X}'y \] along with predictions and residuals \[ \hat{y}=\textbf{X} \hat{\beta} \\ \hat{\epsilon}=y - \hat{y} \]
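The estimator follows from the first-order condition of the minimization problem. As a short derivation sketch, assuming \(\textbf{X}'\textbf{X}\) is invertible (no perfectly collinear columns): \[ \frac{\partial}{\partial \beta} (y-\textbf{X}\beta)'(y-\textbf{X}\beta) = -2\textbf{X}'(y-\textbf{X}\beta) = 0 \quad \Rightarrow \quad \textbf{X}'\textbf{X}\hat{\beta} = \textbf{X}'y \quad \Rightarrow \quad \hat{\beta}=(\textbf{X}'\textbf{X})^{-1}\textbf{X}'y \]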

Code

# Manually Compute

Y <- USArrests[,'Murder']

X <- USArrests[,c('Assault','UrbanPop')]

X <- as.matrix(cbind(1,X))

XtXi <- solve(t(X)%*%X)

Bhat <- XtXi %*% (t(X)%*%Y)

c(Bhat)

## [1] 3.20715340 0.04390995 -0.04451047

# Check

reg <- lm(Murder~Assault+UrbanPop, data=USArrests)

coef(reg)

## (Intercept) Assault UrbanPop

## 3.20715340 0.04390995 -0.04451047

To measure the “goodness of fit” of the model, we can again plot our predictions.
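A minimal sketch of such a plot, refitting the same model so the block runs on its own. The axis labels and the 45-degree reference line are illustrative choices, not part of the original analysis.

```r
# Fit the model from the text and extract predictions and residuals
reg <- lm(Murder ~ Assault + UrbanPop, data = USArrests)
yhat <- predict(reg)                # fitted values, X %*% Bhat
ehat <- USArrests$Murder - yhat     # residuals, y - yhat

# Predicted vs. observed: points near the dashed 45-degree line are well fit
plot(yhat, USArrests$Murder, pch = 16, col = grey(0, .5),
    xlab = 'Predicted Murder Rate', ylab = 'Observed Murder Rate')
abline(a = 0, b = 1, lty = 2)
```

Because the model includes an intercept, the residuals sum to zero, so the points scatter evenly above and below the line on average.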

We can also again compute sums of squared errors. Adding covariates never lowers the \(R^2\), even when they are pure noise, so we adjust the \(R^2\) for the number of covariates \(K\) (not counting the intercept). \[ R^2 = \frac{ESS}{TSS}=1-\frac{RSS}{TSS}\\ R^2_{\text{adj.}} = 1-\frac{N-1}{N-K-1}(1-R^2) \]

Code

ksims <- 1:30

for(k in ksims){

USArrests[,paste0('R',k)] <- runif(nrow(USArrests),0,20)

}

reg_sim <- lapply(ksims, function(k){

rvars <- c('Assault','UrbanPop', paste0('R',1:k))

rvars2 <- paste0(rvars, collapse='+')

reg_k <- lm( paste0('Murder~',rvars2), data=USArrests)

})

R2_sim <- sapply(reg_sim, function(reg_k){ summary(reg_k)$r.squared })

R2adj_sim <- sapply(reg_sim, function(reg_k){ summary(reg_k)$adj.r.squared })

plot.new()

plot.window(xlim=c(0,30), ylim=c(0,1))

points(ksims, R2_sim)

points(ksims, R2adj_sim, pch=16)

axis(1)

axis(2)

mtext(expression(R^2),2, line=3)

mtext('Additional Random Covariates', 1, line=3)

legend('topleft', horiz=T,

legend=c('Unadjusted', 'Adjusted'), pch=c(1,16))
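The \(R^2\) formulas can also be checked by hand. The sketch below recomputes both statistics for the two-covariate model and compares them with the values from `summary()`. The name `P` is introduced here for the number of estimated coefficients (the covariates plus the intercept).

```r
reg <- lm(Murder ~ Assault + UrbanPop, data = USArrests)
y <- USArrests$Murder
N <- length(y)
P <- length(coef(reg))    # estimated coefficients: covariates + intercept

TSS <- sum((y - mean(y))^2)             # total sum of squares
RSS <- sum(resid(reg)^2)                # residual sum of squares
ESS <- sum((fitted(reg) - mean(y))^2)   # explained sum of squares

R2 <- 1 - RSS/TSS
R2_adj <- 1 - (N - 1)/(N - P) * (1 - R2)

# Compare with the values reported by summary()
c(manual = R2, summary = summary(reg)$r.squared)
c(manual = R2_adj, summary = summary(reg)$adj.r.squared)
```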

10.2 Factor Variables

So far, we have discussed cardinal data where the difference between units always means the same thing: e.g., \(4-3=2-1\). There are also factor variables:

- Ordered: refers to Ordinal data. The difference between units means something, but not always the same thing. For example, \(4th - 3rd \neq 2nd - 1st\).

- Unordered: refers to Categorical data. The difference between units is meaningless. For example, \(B-A=?\)

To analyze either kind of factor, we often convert it into indicator variables, or dummies: \(D_{c}=\mathbf{1}( Factor = c)\). One common case is observations of individuals over time periods, where you may have two factor variables: an unordered factor that indicates who an individual is, for example \(D_{i}=\mathbf{1}( Individual = i)\), and an ordered factor that indicates the time period, for example \(D_{t}=\mathbf{1}( Time \in [month~ t, month~ t+1) )\). There are many other cases where you see factor variables, including spatial IDs in purely cross-sectional data.

Be careful not to handle categorical data as if they were cardinal. For example, if you code city data as Leipzig=1, Lausanne=2, LosAngeles=3, …, do not include city in a regression as if it were a cardinal number (that’s a big no-no). The same applies to ordinal data, such as population ranks PopulationLeipzig=2, PopulationLausanne=3, PopulationLosAngeles=1.
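The difference between the two codings is easy to see in a toy example. The data frame below is hypothetical (the outcome values and the numeric city codes are made up for illustration). `model.matrix()` shows the indicator columns that R builds from a factor.

```r
# Hypothetical data: outcome values and city codes are for illustration only
dat <- data.frame(
    y = c(3.1, 2.4, 5.0, 2.9, 2.2, 5.3),
    city = c('Leipzig', 'Lausanne', 'LosAngeles',
        'Leipzig', 'Lausanne', 'LosAngeles'))
codes <- c(Leipzig = 1, Lausanne = 2, LosAngeles = 3)
dat$city_code <- unname(codes[as.character(dat$city)])

# Misleading: the arbitrary codes are treated as a cardinal variable,
# which forces a single slope across cities
coef(lm(y ~ city_code, data = dat))

# Better: treat city as a factor, which expands into indicator columns
dat$city <- factor(dat$city)
D <- model.matrix(~ city, data = dat)
D
reg_f <- lm(y ~ city, data = dat)
coef(reg_f)
```

With the factor coding, the intercept is the average outcome in the reference city (the first factor level) and each remaining coefficient is a difference from that reference.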

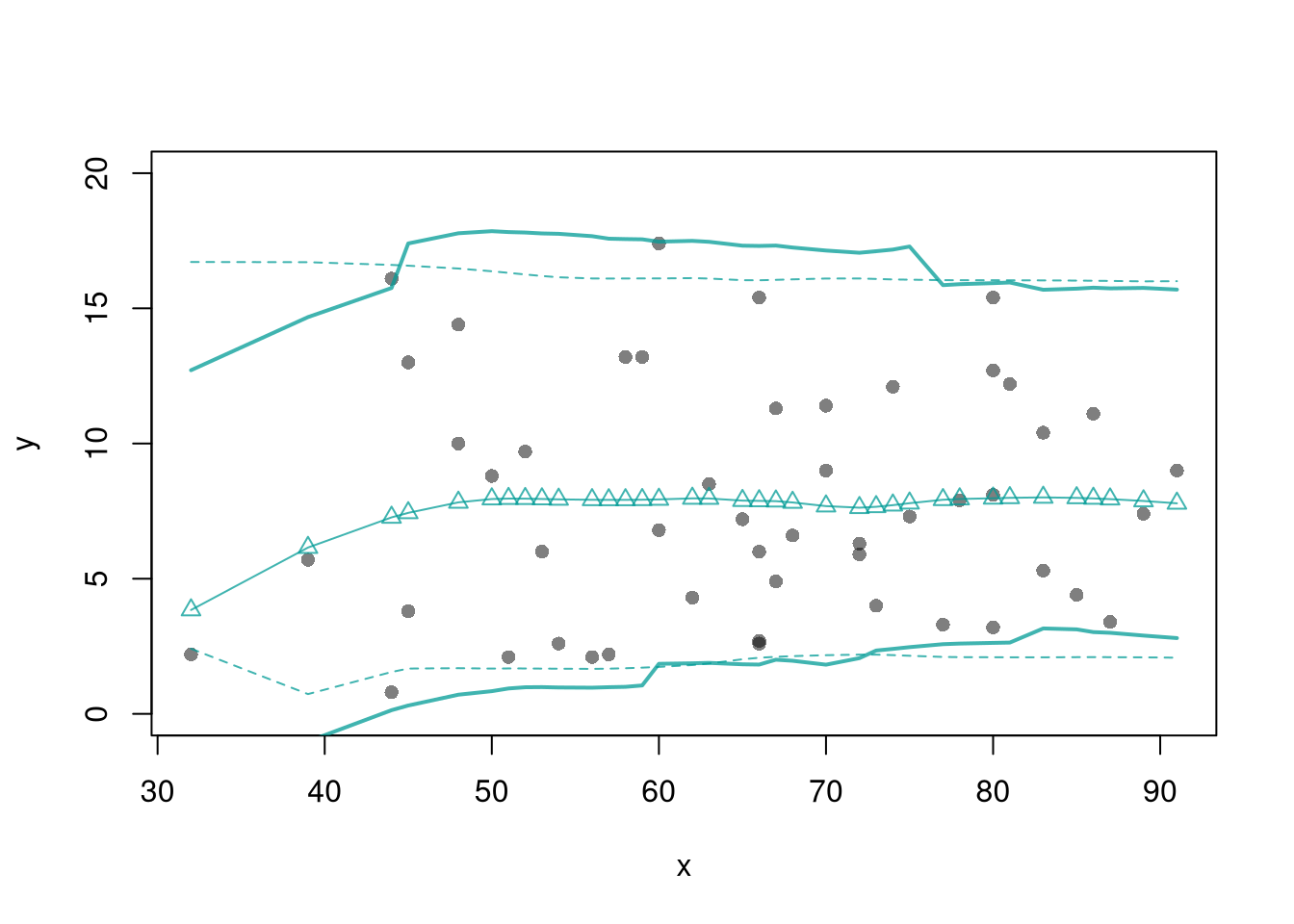


You can still include factors in the design matrix of an OLS regression \[ y_{it} = x_{it} \beta_{x} + d_{t}\beta_{t} \] When, as is commonly done, the factors are modeled as additively separable, they are called “fixed effects”.⁵ Simply including the factors in the OLS regression yields a “dummy variable” fixed effects estimator.

Hansen, Econometrics, Theorem 17.1: The fixed effects estimator of \(\beta\) algebraically equals the dummy variable estimator of \(\beta\). The two estimators have the same residuals.
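The regressions that follow use a data frame `dat_f` with an outcome `y`, a regressor `x`, a five-level factor `fo`, and a two-level factor `fu`, but its construction is not shown. The sketch below builds a hypothetical stand-in with the same column names and factor levels. The data generating process is an assumption made here for illustration, so estimates from it will not match the printed output.

```r
# Hypothetical stand-in for dat_f (the DGP below is assumed, not the original)
set.seed(123)
N <- 1000
x <- runif(N, 3, 8)
fo <- factor(rbinom(N, 4, .5), levels = 0:4)          # levels 0,...,4
fu <- factor(sample(c('A', 'B'), N, replace = TRUE))  # levels A, B
# Assumed outcome: the factors interact with each other and with x
y <- 2^as.integer(fo) * (fu == 'A') * sqrt(x) + rnorm(N, 0, 2)
dat_f <- data.frame(y, x, fo, fu)

# Design matrix of the dummy variable regression y ~ -1 + x + fo + fu
D <- model.matrix(~ -1 + x + fo + fu, data = dat_f)
head(D)
```

Without an intercept, `fo` contributes one indicator per level, while `fu` contributes only `fuB`, since a full set of indicators for both factors would be perfectly collinear.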

Code

library(fixest)

fe_reg1 <- feols(y~x|fo+fu, dat_f)

coef(fe_reg1)

## x

## 0.7044831

fixef(fe_reg1)[1:2]

## $fo

## 0 1 2 3 4

## 11.19088 12.69812 17.61071 26.60199 42.54035

##

## $fu

## A B

## 0.00000 -23.86648

# Compare Coefficients

fe_reg0 <- lm(y~-1+x+fo+fu, dat_f)

coef( fe_reg0 )

## x fo0 fo1 fo2 fo3 fo4

## 0.7044831 11.1908783 12.6981230 17.6107149 26.6019901 42.5403517

## fuB

## -23.8664785

With fixed effects, we can also compute averages for each group and construct a between estimator: \(\bar{y}_i = \alpha + \bar{x}_i \beta\). Or we can subtract the average from each group to construct a within estimator: \((y_{it} - \bar{y}_i) = (x_{it}-\bar{x}_i)\beta\).
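As a sketch of these estimators, the code below simulates a small hypothetical panel (50 individuals, 6 periods, true slope of 2, and individual effects correlated with the regressor, all assumptions made here) and compares the dummy variable, within, and between estimates.

```r
set.seed(1)
n_id <- 50
n_t <- 6
id <- rep(1:n_id, each = n_t)
a_i <- rnorm(n_id, 0, 5)[id]          # individual fixed effects
x <- 0.5*a_i + rnorm(n_id*n_t)        # regressor correlated with the effects
y <- a_i + 2*x + rnorm(n_id*n_t)

# Dummy variable estimator: one indicator per individual
b_dummy <- coef(lm(y ~ -1 + x + factor(id)))['x']

# Within estimator: subtract each individual's average, then regress
y_w <- y - ave(y, id)
x_w <- x - ave(x, id)
b_within <- coef(lm(y_w ~ -1 + x_w))['x_w']

# Between estimator: regress individual averages on individual averages
y_b <- tapply(y, id, mean)
x_b <- tapply(x, id, mean)
b_between <- coef(lm(y_b ~ x_b))['x_b']

c(dummy = unname(b_dummy), within = unname(b_within),
    between = unname(b_between))
```

The dummy variable and within estimates coincide, as the theorem states. The between estimate generally differs in this design because the individual effects are correlated with the regressor.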

But note that many factors are not additively separable. This is easy to check with an F-test:

Code

reg0 <- lm(y~-1+x+fo+fu, dat_f)

reg1 <- lm(y~-1+x+fo*fu, dat_f)

reg2 <- lm(y~-1+x*fo*fu, dat_f)

anova(reg0, reg2)

## Analysis of Variance Table

##

## Model 1: y ~ -1 + x + fo + fu

## Model 2: y ~ -1 + x * fo * fu

## Res.Df RSS Df Sum of Sq F Pr(>F)

## 1 993 82085

## 2 980 6390 13 75696 893.04 < 2.2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

anova(reg0, reg1, reg2)

## Analysis of Variance Table

##

## Model 1: y ~ -1 + x + fo + fu

## Model 2: y ~ -1 + x + fo * fu

## Model 3: y ~ -1 + x * fo * fu

## Res.Df RSS Df Sum of Sq F Pr(>F)

## 1 993 82085

## 2 989 11233 4 70852 2716.666 < 2.2e-16 ***

## 3 980 6390 9 4844 82.544 < 2.2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

10.3 Variability Estimates

To estimate the variability of our estimates, we can use the same data-driven methods introduced in the last section. As before, we can conduct independent hypothesis tests using t-values.

We can also conduct joint tests that account for interdependencies in our estimates. For example, to test whether two coefficients both equal \(0\), we bootstrap the joint distribution of coefficients.
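A minimal sketch of both ideas, assuming 399 bootstrap resamples. Standard errors are the standard deviations of the bootstrap coefficients. For the joint test, the Mahalanobis distance is one possible way to measure how far a pair of coefficients is from \((0,0)\); it is a choice made here for illustration rather than the only option.

```r
set.seed(1)
reg <- lm(Murder ~ Assault + UrbanPop, data = USArrests)

# Bootstrap the coefficients by resampling rows with replacement
boot_coefs <- sapply(1:399, function(b){
    b_id <- sample(nrow(USArrests), replace = TRUE)
    coef(lm(Murder ~ Assault + UrbanPop, data = USArrests[b_id, ]))
})

# Bootstrap standard errors: spread of each coefficient across resamples
boot_se <- apply(boot_coefs, 1, sd)
boot_se

# Joint test of Assault = UrbanPop = 0
slopes <- t(boot_coefs[c('Assault', 'UrbanPop'), ])   # 399 x 2 matrix
V <- cov(slopes)                                      # joint variability
slopes0 <- sweep(slopes, 2, colMeans(slopes))         # recenter at 0 (the null)
d_boot <- mahalanobis(slopes0, center = c(0, 0), cov = V)
d_obs <- mahalanobis(coef(reg)[c('Assault', 'UrbanPop')],
    center = c(0, 0), cov = V)

# Share of null draws at least as far from (0,0) as the observed estimates
p_joint <- mean(d_boot >= d_obs)
p_joint
```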

Code

# Bootstrap SE's

boots <- 1:399

boot_regs <- lapply(boots, function(b){

b_id <- sample( nrow(USArrests), replace=T)

xy_b <- USArrests[b_id,]

reg_b <- lm(Murder~Assault+UrbanPop, data=xy_b)

})

boot_coefs <- sapply(boot_regs, coef)

# Recenter at 0 to impose the null

#boot_means <- rowMeans(boot_coefs)

#boot_coefs0 <- sweep(boot_coefs, MARGIN=1, STATS=boot_means)

Code

boot_coef_df <- as.data.frame(cbind(ID=boots, t(boot_coefs)))

fig <- plotly::plot_ly(boot_coef_df,

type = 'scatter', mode = 'markers',

x = ~UrbanPop, y = ~Assault,

text = ~paste('<b> bootstrap dataset: ', ID, '</b>',

'<br>Coef. UrbanPop :', round(UrbanPop,3),

'<br>Coef. Assault :', round(Assault,3),

'<br>Coef. Intercept :', round(`(Intercept)`,3)),

hoverinfo='text',

showlegend=F,

marker=list( color='rgba(0, 0, 0, 0.5)'))

fig <- plotly::layout(fig,

showlegend=F,

title='Joint Distribution of Coefficients (under the null)',

xaxis = list(title='UrbanPop Coefficient'),

yaxis = list(title='Assault Coefficient'))

fig

10.4 Hypothesis Tests

F-statistic.

We can also use an \(F\) test for any \(q\) hypotheses. Specifically, when \(q\) hypotheses restrict a model, the number of estimated parameters drops from \(k_{u}\) to \(k_{r}\) and the residual sum of squares \(RSS=\sum_{i}(y_{i}-\widehat{y}_{i})^2\) typically increases. We compute the statistic \[ F_{q} = \frac{(RSS_{r}-RSS_{u})/(k_{u}-k_{r})}{RSS_{u}/(N-k_{u})} \]

If you test whether all \(K\) variables are jointly significant, the restricted model is a simple intercept, so \(k_{u}=K+1\), \(k_{r}=1\), and \(RSS_{r}=TSS\). Then \(F_{q}\) can be written in terms of \(R^2\): \(F_{K} = \frac{R^2}{1-R^2} \frac{N-K-1}{K}\). The first fraction is the relative goodness of fit, and the second fraction is an adjustment for degrees of freedom (similar to how we adjusted the \(R^2\) term before).
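As a check on the formula, the sketch below computes the statistic by hand for the test that both slopes are zero, and compares it with the \(R^2\) version and with the value stored by `summary()`. The object names `reg_u` and `reg_r` are introduced here for the unrestricted and restricted models.

```r
reg_u <- lm(Murder ~ Assault + UrbanPop, data = USArrests)   # unrestricted
reg_r <- lm(Murder ~ 1, data = USArrests)                    # intercept only

RSS_u <- sum(resid(reg_u)^2)
RSS_r <- sum(resid(reg_r)^2)    # equals TSS for an intercept-only model
N <- nrow(USArrests)
k_u <- length(coef(reg_u))      # estimated parameters, unrestricted
k_r <- length(coef(reg_r))      # estimated parameters, restricted

F_q <- ((RSS_r - RSS_u)/(k_u - k_r)) / (RSS_u/(N - k_u))

# Same statistic written in terms of R^2
R2 <- summary(reg_u)$r.squared
F_R2 <- R2/(1 - R2) * (N - k_u)/(k_u - k_r)

c(F_q, F_R2, unname(summary(reg_u)$fstatistic['value']))
```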

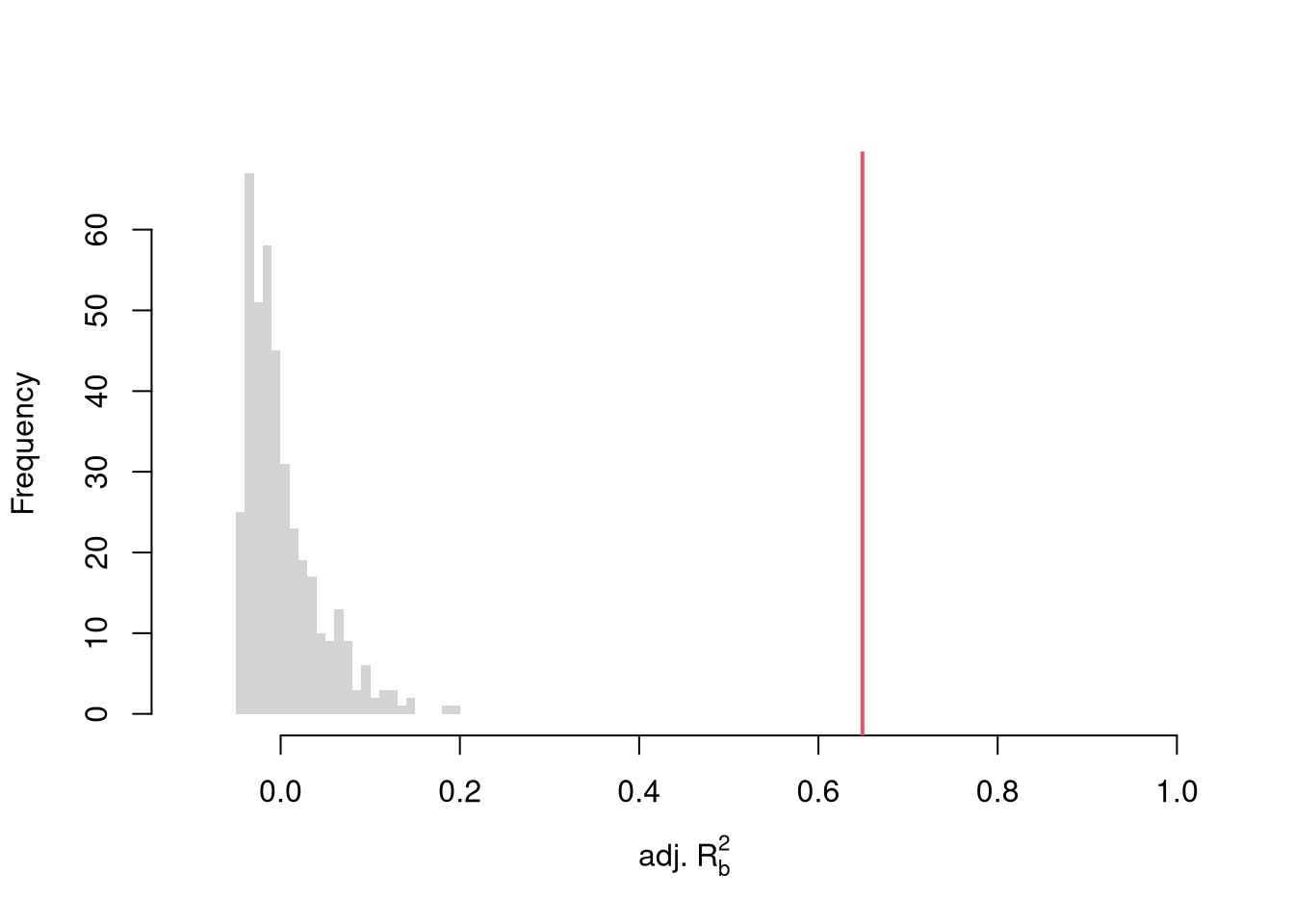

To conduct a hypothesis test, first compute a null distribution by randomly reshuffling the outcomes and recomputing the \(F\) statistic each time, and then check how often random data give something as extreme as your initial statistic. For some intuition on this \(F\) test, examine how the adjusted \(R^2\) statistic varies with bootstrap samples.

Code

# Bootstrap under the null

boots <- 1:399

boot_regs0 <- lapply(boots, function(b){

# Generate bootstrap sample

xy_b <- USArrests

b_id <- sample( nrow(USArrests), replace=T)

# Impose the null

xy_b$Murder <- xy_b$Murder[b_id]

# Run regression

reg_b <- lm(Murder~Assault+UrbanPop, data=xy_b)

})

# Get null distribution for adjusted R2

R2adj_sim0 <- sapply(boot_regs0, function(reg_k){

summary(reg_k)$adj.r.squared })

hist(R2adj_sim0, xlim=c(-.1,1), breaks=25, border=NA,

main='', xlab=expression('adj.'~R[b]^2))

# Compare to initial statistic

abline(v=summary(reg)$adj.r.squared, lwd=2, col=2)
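The same procedure can be applied to the \(F\) statistic itself to obtain a p-value. The sketch below is one way to do so, assuming 399 reshuffles. Unlike the block above, it permutes the outcomes without replacement rather than resampling them with replacement.

```r
set.seed(1)
reg <- lm(Murder ~ Assault + UrbanPop, data = USArrests)
F_obs <- unname(summary(reg)$fstatistic['value'])

# Null distribution: reshuffle outcomes so they are unrelated to the covariates
F_null <- sapply(1:399, function(b){
    xy_b <- USArrests
    xy_b$Murder <- sample(xy_b$Murder)    # permutation, without replacement
    reg_b <- lm(Murder ~ Assault + UrbanPop, data = xy_b)
    unname(summary(reg_b)$fstatistic['value'])
})

# Share of reshuffled datasets with an F statistic at least as large
p_val <- mean(F_null >= F_obs)
p_val
```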

Note that hypothesis testing is not to be done routinely, as additional complications arise when testing multiple hypotheses sequentially.

Under some additional assumptions \(F_{q}\) follows an F-distribution. For more about F-testing, see https://online.stat.psu.edu/stat501/lesson/6/6.2 and https://www.econometrics.blog/post/understanding-the-f-statistic/

10.5 Coefficient Interpretation

Notice that we have gotten pretty far without actually trying to meaningfully interpret regression coefficients. That is because the above procedure will always give us a number, regardless of whether the true data generating process is linear. So, to be cautious, we have been interpreting the regression outputs while being agnostic as to how the data are generated. We now consider a special situation where we know the data are generated according to a linear process and are only uncertain about the parameter values.

If the data generating process is \[ y=X\beta + \epsilon\\ \mathbb{E}[\epsilon | X]=0, \] then we have a famous result that lets us attach a simple interpretation to OLS coefficients as unbiased estimates of the effect of \(X\): \[ \hat{\beta} = (X'X)^{-1}X'y = (X'X)^{-1}X'(X\beta + \epsilon) = \beta + (X'X)^{-1}X'\epsilon\\ \mathbb{E}\left[ \hat{\beta} | X \right] = \mathbb{E}\left[ (X'X)^{-1}X'y | X \right] = \beta + (X'X)^{-1}X'\mathbb{E}\left[ \epsilon | X \right] = \beta \]

Generate a simulated dataset with 30 observations and two exogenous variables. Assume the following relationship: \(y_{i} = \beta_0 + \beta_1 x_{i1} + \beta_2 x_{i2} + \epsilon_i\) where the variables and the error term are realizations of the following data generating processes (DGP):

Code

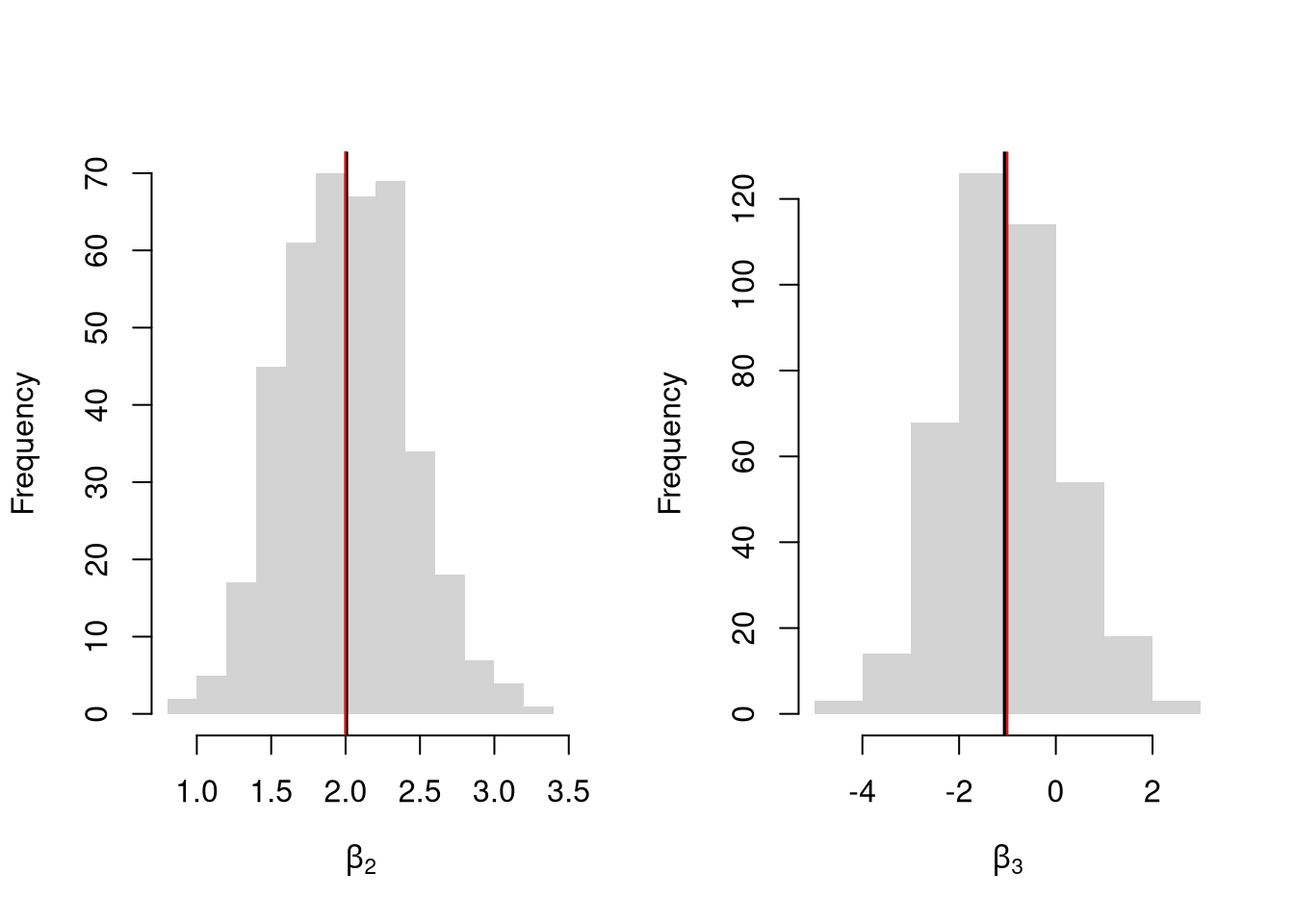

Simulate the distribution of coefficients under a correctly specified model. Interpret the average.

Code

N <- 30

B <- c(10, 2, -1)

Coefs <- sapply(1:400, function(sim){

x1 <- runif(N, 0, 5)

x2 <- rbinom(N,1,.7)

X <- cbind(1,x1,x2)

e <- rnorm(N,0,3)

Y <- X%*%B + e

dat <- data.frame(Y,x1,x2)

coef(lm(Y~x1+x2, data=dat))

})

par(mfrow=c(1,2))

for(i in 2:3){

hist(Coefs[i,], xlab=bquote(beta[.(i-1)]), main='', border=NA)

abline(v=mean(Coefs[i,]), lwd=2)

abline(v=B[i], col=rgb(1,0,0))

}

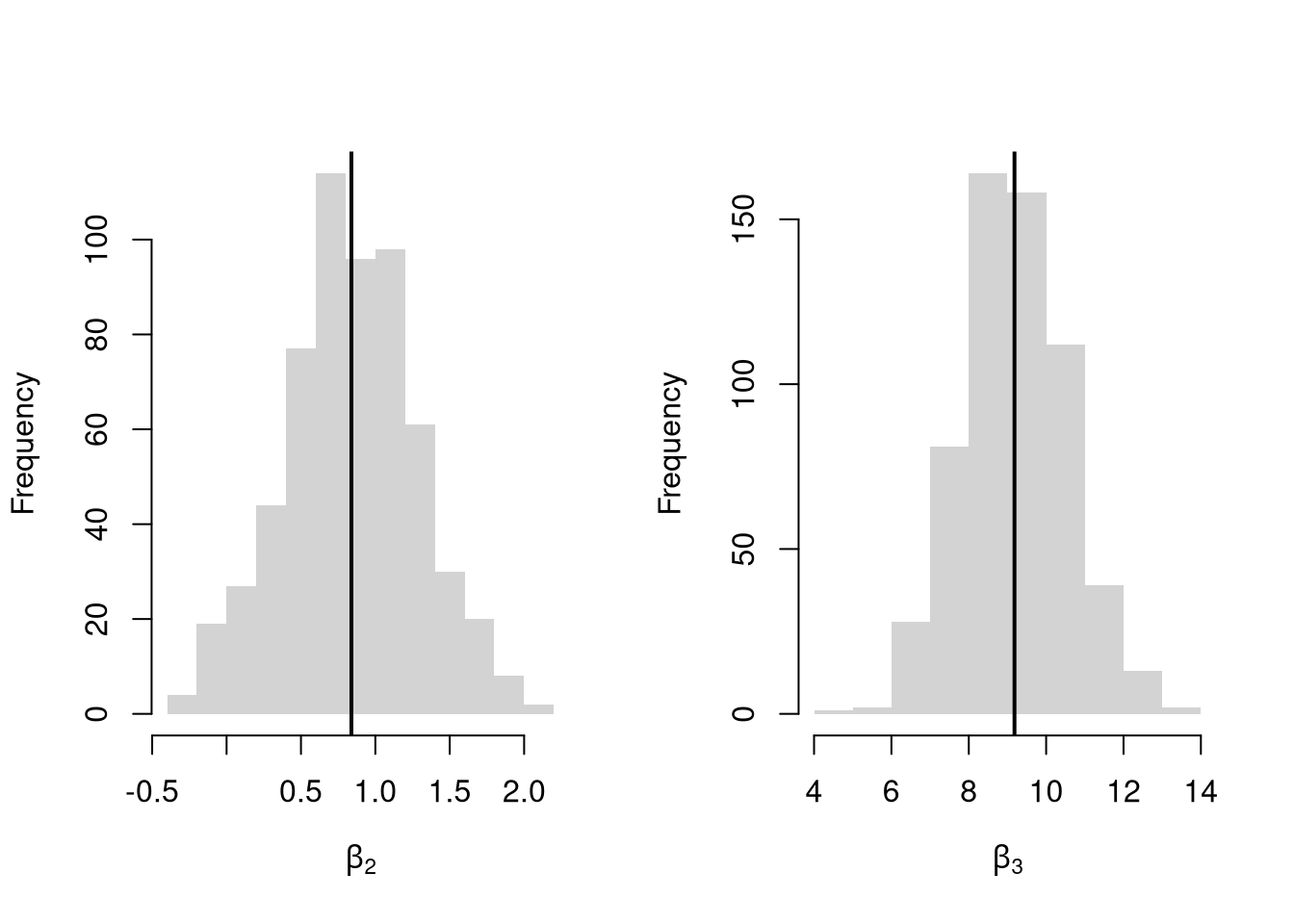
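To put numbers on the comparison, the sketch below reruns the same simulation and tabulates the average estimate against the true value. The seed and the Monte Carlo standard error column are additions made here.

```r
set.seed(1)
N <- 30
B <- c(10, 2, -1)
Coefs <- sapply(1:400, function(sim){
    x1 <- runif(N, 0, 5)
    x2 <- rbinom(N, 1, .7)
    Y <- drop(cbind(1, x1, x2) %*% B) + rnorm(N, 0, 3)
    coef(lm(Y ~ x1 + x2))
})

# Average estimate, true value, and Monte Carlo standard error of the average
avg <- rowMeans(Coefs)
mc_se <- apply(Coefs, 1, sd)/sqrt(ncol(Coefs))
cbind(truth = B, average = avg, mc_se = mc_se)
```

Under a correctly specified model, the averages should sit within a few Monte Carlo standard errors of the true values, which is what unbiasedness means in practice.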

Many economic phenomena are nonlinear, even when including potential transforms of \(Y\) and \(X\). Sometimes the linear model may still be a good or even great approximation. But sometimes not, and it is hard to know ex-ante. Examine the distribution of coefficients under this misspecified model and try to interpret the average.

Code

N <- 30

Coefs <- sapply(1:600, function(sim){

x2 <- runif(N, 0, 5)

x3 <- rbinom(N,1,.7)

e <- rnorm(N,0,3)

Y <- 10*x3 + 2*log(x2)^x3 + e

dat <- data.frame(Y,x2,x3)

coef(lm(Y~x2+x3, data=dat))

})

par(mfrow=c(1,2))

for(i in 2:3){

hist(Coefs[i,], xlab=bquote(beta[.(i)]), main='', border=NA)

abline(v=mean(Coefs[i,]), col=1, lwd=2)

}

In general, you can interpret your regression coefficients as “adjusted correlations”. There are (many) tests for whether the relationships in your dataset are actually additively separable and linear.
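One such check reuses the nested-model F-test from the factor variables section. The sketch below draws a single dataset from the misspecified process above and compares the additive linear model with two more flexible alternatives. The larger sample size (300 rather than 30) and the particular alternatives (an interaction, then a quadratic term in `x2`) are assumptions made here for illustration.

```r
set.seed(1)
N <- 300
x2 <- runif(N, 0, 5)
x3 <- rbinom(N, 1, .7)
Y <- 10*x3 + 2*log(x2)^x3 + rnorm(N, 0, 3)
dat <- data.frame(Y, x2, x3)

reg_lin <- lm(Y ~ x2 + x3, data = dat)                # additive and linear
reg_int <- lm(Y ~ x2 * x3, data = dat)                # relax additive separability
reg_flex <- lm(Y ~ (x2 + I(x2^2)) * x3, data = dat)   # also allow curvature in x2

# Sequential F-tests of each model against the next, more flexible one
tab <- anova(reg_lin, reg_int, reg_flex)
tab
```

Small p-values indicate that the restrictions imposed by the simpler model are at odds with the data. Large p-values do not show the simpler model is correct, only that these particular alternatives do not fit detectably better.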

10.6 Further Reading

For OLS, see

- https://bookdown.org/josiesmith/qrmbook/linear-estimation-and-minimizing-error.html

- https://www.econometrics-with-r.org/4-lrwor.html

- https://www.econometrics-with-r.org/6-rmwmr.html

- https://www.econometrics-with-r.org/7-htaciimr.html

- https://bookdown.org/ripberjt/labbook/bivariate-linear-regression.html

- https://bookdown.org/ripberjt/labbook/multivariable-linear-regression.html

- https://online.stat.psu.edu/stat462/node/137/

- https://book.stat420.org/

- Hill, Griffiths & Lim (2007), Principles of Econometrics, 3rd ed., Wiley, p. 86f.

- Verbeek (2004), A Guide to Modern Econometrics, 2nd ed., Wiley, pp. 51ff.

- Asteriou & Hall (2011), Applied Econometrics, 2nd ed., Palgrave MacMillan, pp. 177ff.

- https://online.stat.psu.edu/stat485/lesson/11/

To derive OLS coefficients in Matrix form, see

- https://jrnold.github.io/intro-methods-notes/ols-in-matrix-form.html

- https://www.fsb.miamioh.edu/lij14/411_note_matrix.pdf

- https://web.stanford.edu/~mrosenfe/soc_meth_proj3/matrix_OLS_NYU_notes.pdf

For fixed effects, see

5. There are also random effects, where the factor variable comes from a distribution that is uncorrelated with the regressors. These are rarely used in economics today, however, and are mostly included for historical reasons and for special cases where fixed effects cannot be estimated due to data limitations.