
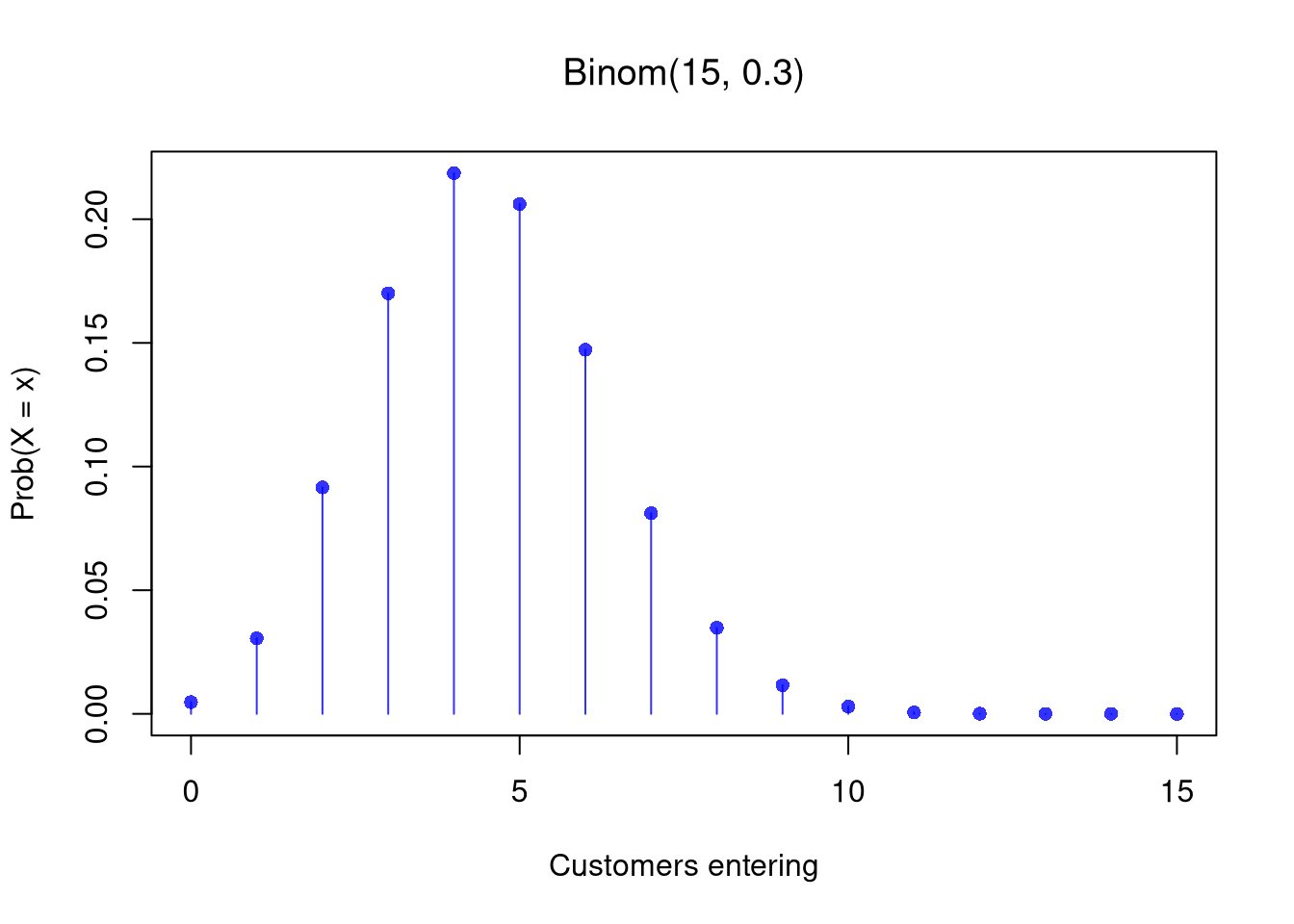

## Store: 15 passers-by, 30% chance each enters

```r
n <- 15   # number of passers-by (trials)
p <- 0.3  # probability that each one enters

# PMF plot
x <- seq(0, n)          # support: 0, 1, ..., n customers
f_x <- dbinom(x, n, p)  # P(X = x)
plot(x, f_x, type = 'h', col = rgb(0, 0, 1, .8),
     main = NA, xlab = 'Customers entering', ylab = 'Prob(X = x)')
title(bquote(paste('Binom(', .(n), ', ', .(p), ')')), font.main = 1)
points(x, f_x, pch = 16, col = rgb(0, 0, 1, .8))
```
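As a check on the plotted values, the binomial PMF is $P(X = x) = \binom{n}{x} p^x (1-p)^{n-x}$ for $x = 0, \dots, n$. The sketch below rebuilds it with `choose()`, confirms that it matches `dbinom()`, and recovers the mean $np = 4.5$ and variance $np(1-p) = 3.15$ directly from the PMF. The names `f_manual`, `mu`, and `sigma2` are illustrative and not part of the original code.

```r
# Binomial model from the example: 15 passers-by, each enters with probability 0.3
n <- 15
p <- 0.3
x <- 0:n

# PMF written out from the formula: choose(n, x) * p^x * (1 - p)^(n - x)
f_manual <- choose(n, x) * p^x * (1 - p)^(n - x)

# Agrees with R's built-in dbinom() up to floating-point error
all.equal(f_manual, dbinom(x, n, p))

# Probabilities over the full support sum to (approximately) 1
sum(f_manual)

# Mean and variance computed from the PMF; compare with n*p and n*p*(1-p)
mu <- sum(x * f_manual)
sigma2 <- sum((x - mu)^2 * f_manual)
all.equal(mu, n * p)
all.equal(sigma2, n * p * (1 - p))

# Most likely number of customers (the mode)
x[which.max(f_manual)]
```

For these parameters the most likely outcome is 4 customers, consistent with the mode formula $\lfloor (n+1)p \rfloor = \lfloor 4.8 \rfloor = 4$.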


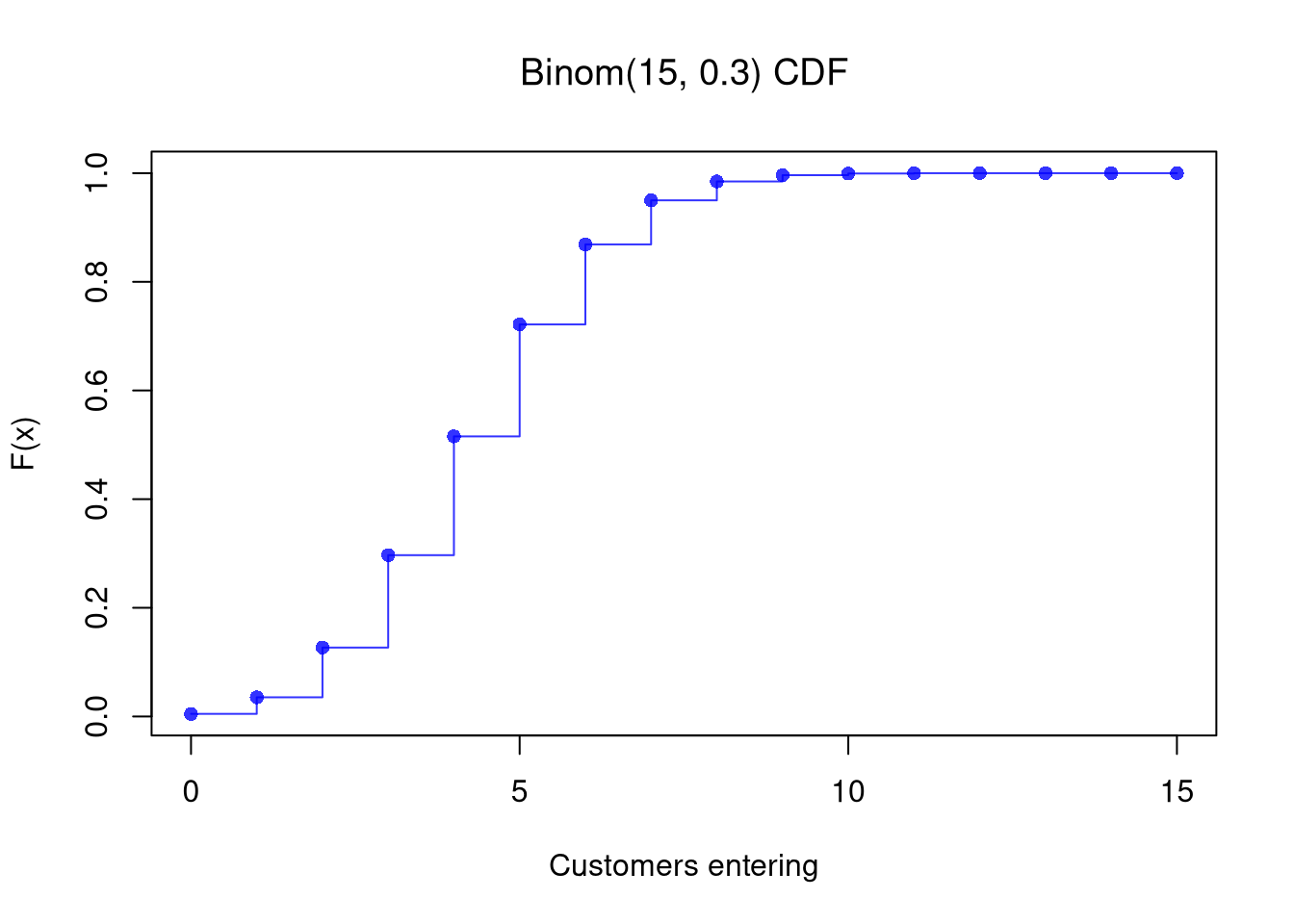

## Theoretical CDF

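```r
F_x <- pbinom(x, n, p)  # F(x) = P(X <= x)
# type = 's' draws stair steps (horizontal first), matching a right-continuous CDF
plot(x, F_x, type = 's', col = rgb(0, 0, 1, .8),
     xlab = 'Customers entering', ylab = 'F(x)', main = NA)
points(x, F_x, pch = 16, col = rgb(0, 0, 1, .8))
title(bquote(paste('Binom(', .(n), ', ', .(p), ') CDF')), font.main = 1)
```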
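Because $X$ is discrete, $F(x) = P(X \le x) = \sum_{k \le x} P(X = k)$ is the running sum of the PMF, which is why the CDF plot is a step function. A minimal sketch that checks this against `pbinom()` and reads a few tail probabilities and the median off the CDF; the names `F_manual` and `p_ge_8` are illustrative, not from the original.

```r
# Same model: 15 passers-by, each enters with probability 0.3
n <- 15
p <- 0.3
x <- 0:n

# The CDF is the cumulative sum of the PMF
F_manual <- cumsum(dbinom(x, n, p))
all.equal(F_manual, pbinom(x, n, p))

# P(at most 4 customers enter), roughly 0.52
pbinom(4, n, p)

# P(at least 8 customers enter) = 1 - F(7);
# lower.tail = FALSE returns P(X > 7) directly, which is the same event
p_ge_8 <- 1 - pbinom(7, n, p)
all.equal(p_ge_8, pbinom(7, n, p, lower.tail = FALSE))

# Median: smallest x with F(x) >= 0.5; qbinom() inverts the CDF this way
x[which(F_manual >= 0.5)[1]]
qbinom(0.5, n, p)
```

Using `lower.tail = FALSE` rather than `1 - pbinom(...)` avoids cancellation error when the upper tail is very small; here the two agree.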